Is Your Child’s Data the "Fuel" for AI? What Every Parent Needs to Know Now

If you’re worried that your child’s private thoughts, homework, or personal data are being sucked up into a giant computer "brain" to be sold or misused, you aren't alone. Let’s pull back the curtain on AI privacy and give you the checklist you need to protect your family.

PARENTING

ParentEd AI Academy Staff

4/15/2026 · 3 min read

As parents, we’ve finally figured out how to manage screen time and social media. But just as we caught our breath, a new guest arrived at the dinner table: Artificial Intelligence.

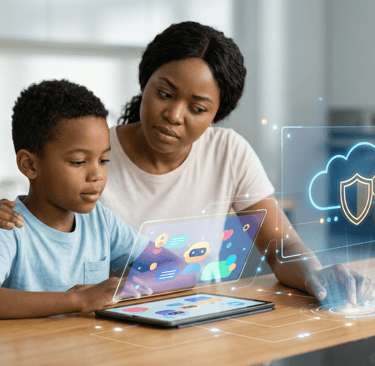

Whether it’s a chatbot helping with a history essay or a new math app that "talks" to your child, AI is everywhere in schools this year. While these tools are exciting, they raise a scary question that nearly 80% of parents are asking: "Is my child actually safe using this?"


The Big Concern: Why Parents are Nervous

For years, we’ve been told that "if the product is free, you are the product." In the past, some school apps were caught tracking students' locations or selling their browsing data to advertisers.

With AI, the stakes are higher. Generative AI (like ChatGPT) needs massive amounts of data to learn. Parents are rightfully asking: Is my daughter’s creative writing assignment being used to train a robot? Is my son’s voice recording being saved forever?

The "Privacy Shield": How Your Data is Used

When your child uses an AI tool, their information usually goes into one of two "buckets":

1. The "Training" Bucket (The One to Avoid)

In this scenario, the AI company takes everything your child types and uses it to "teach" the AI how to be smarter. This is a red flag for privacy because once that data has been used to train the system, it’s very hard to remove.

2. The "Private Cloud" Bucket (The One You Want)

Many schools now use "Enterprise" or "Education" versions of AI. In these versions, there is a digital "wall" around your child’s data. The AI uses the information to help your child, but afterward it is either deleted or kept in secure storage that the company has agreed not to use for training its models or for advertising.

Do AI Apps Collect Student Data?

The short answer is: Yes, but they shouldn't collect more than they need.

Under a federal law called COPPA (Children's Online Privacy Protection Act), apps aren't allowed to collect personal info from kids under 13 without a parent's "okay." In 2026, new state laws in places like California and Idaho are going even further, specifically banning companies from using student data to train AI models.

How to Tell if a Tool is "Safe"

You don’t need to be a computer scientist to vet an app. Just look for these three "Green Flags":

The "No-Train" Promise: Look for a statement that says, "We do not use student data to train our models." This is the gold standard for privacy.

The "Human in the Loop": A safe app doesn't let the AI make big decisions alone. There should always be a way for a teacher or parent to see what the AI is saying.

The "I am a Robot" Disclaimer: Good AI tools for kids will regularly remind them: "I am a computer, not a real person." This helps discourage kids from sharing deep secrets they should only tell a trusted human.

A "Cheat Sheet" for Your Next Teacher Conference

The next time you talk to your child’s teacher or principal, ask these three simple questions:

"Does the district have a signed privacy agreement with this AI company?" (This ensures the company is legally bound to protect your child.)

"Can I see what my child has discussed with the AI?" (Safe tools usually have a "parent dashboard" or history log.)

"Is this tool 'Age-Gated'?" (Make sure your 10-year-old isn't using a tool built for adults.)

The Bottom Line: Stay Curious, Not Fearful

AI is a powerful tool that can help your child learn faster and more creatively. But just like a swimming pool, it’s only safe if there are fences and a lifeguard.

By asking the right questions, you aren't being "difficult"—you’re being a "digital guardian." You have the right to know where your child’s data goes, and your school has the responsibility to keep that data under lock and key.
